Introduction

Retrieval-Augmented Generation (RAG) systems combine the power of information retrieval with large language models (LLMs) to produce grounded, context-aware responses. At its core, every RAG pipeline follows a straightforward principle:

- Retrieval: Identify and fetch relevant documents or chunks from a knowledge base using embeddings and vector search.

- Generation: Leverage an LLM to synthesize an answer based solely on the retrieved information, minimizing reliance on the model’s parametric knowledge.

While this architecture promises reduced hallucinations and enhanced accuracy, real-world implementations often falter—leading to fabricated details or overlooked critical information. These issues can stem from myriad stages, including data ingestion (e.g., poor chunking), embedding models (e.g., weak semantic capture), retrieval strategies (e.g., suboptimal top-k or reranking), system prompts (e.g., ambiguous instructions), and context window limitations (e.g., truncation under high load). Debugging and fine-tuning these elements manually can be time-intensive and error-prone, potentially delaying deployment and eroding user trust.

This is where DeepEval enters the picture. As a robust open-source evaluation framework, DeepEval empowers developers to systematically assess RAG performance using LLM-as-a-judge metrics. It transforms subjective “gut checks” into quantifiable, repeatable benchmarks, enabling iterative improvements across your pipeline. In this post, we’ll explore DeepEval’s mechanics, dive into its single-turn metrics (ideal for baseline RAG evaluation), and provide practical tools like a debugging cheat sheet. We’ll also touch on extensions to multi-turn and agentic scenarios, with guidance on scaling beyond basic single-turn setups.

What is DeepEval?

DeepEval is a powerful open-source framework designed specifically for evaluating LLM applications, with a strong emphasis on RAG pipelines. It leverages over 50 out-of-the-box metrics—covering faithfulness, relevancy, contextual precision, and more—to ensure your AI outputs are reliable, accurate, and free from common pitfalls like hallucinations. By employing LLMs as impartial judges (e.g., GPT-4o or Claude 3), DeepEval delivers nuanced, human-like assessments without requiring extensive custom coding. Whether you’re prototyping a simple Q&A bot or refining a production-grade knowledge base, it integrates seamlessly with frameworks like LangChain or LlamaIndex, supporting local runs or cloud-scale evaluations.

How Does It Work?

DeepEval operates much like unit testing for traditional software: For each user query, it constructs an LLMTestCase object, executes a suite of selected metrics, and outputs deterministic, repeatable results in structured JSON. This allows you to track incremental gains—such as tweaking a prompt for better adherence or swapping rerankers for improved precision—while benchmarking against baselines.

A cornerstone of effective testing is curating a “golden dataset”: a curated collection of high-quality test cases you deem 100% trustworthy. These serve as your ground-truth benchmarks, typically comprising 50–500 examples drawn from your domain. Each test case includes up to five key fields (with input and actual_output mandatory):

| Field | What You Put There | Why It Matters for Metrics |

|---|---|---|

| input | The user question (e.g., “What is the current policy in 2025?”) | Required for every metric; defines the query context. |

| actual_output | What your RAG system actually produced. | Required; this is the output under scrutiny by all metrics. |

| expected_output | The ideal, human-crafted reference answer. | Powers metrics like G-Eval, Answer Correctness, and Summarization for semantic alignment. |

| retrieval_context | The list of chunks your retriever actually fetched. | Essential for RAG-specific metrics (e.g., Contextual Precision/Recall, Faithfulness). |

| context (alias) | Ground-truth chunks that should have been retrieved. | Computes recall and precision by highlighting retrieval gaps. |

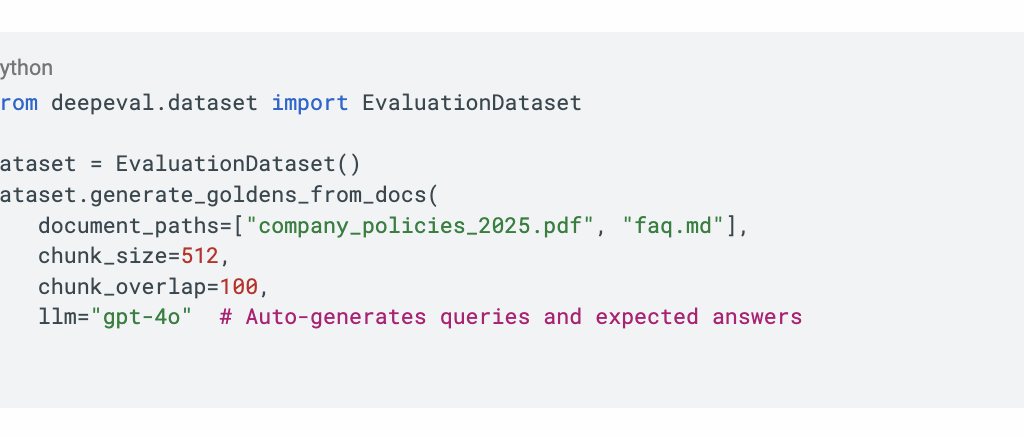

To generate a golden dataset quickly, DeepEval includes built-in tools:

This can yield 100–500 cases in minutes, ready for batch evaluation.

Single-Turn vs. Multi-Turn and Agentic Metrics: A Quick Primer

Before diving into specifics, it’s worth contextualizing: DeepEval’s metrics span application types, but this post focuses on single-turn evaluation—the foundational layer for standard RAG pipelines handling isolated queries (e.g., a one-off FAQ response). Single-turn metrics like those below excel at isolating retrieval and generation flaws in isolation.

For multi-turn scenarios (e.g., conversational chatbots), DeepEval shifts to ConversationalTestCase, enabling metrics like Contextual Relevancy to assess dialogue coherence across exchanges. This catches issues like context drift or forgotten prior turns.

In agentic setups (e.g., RAG with tool-calling for dynamic retrieval or multi-step reasoning), metrics such as Task Completion and Tool Correctness evaluate end-to-end workflows. Task Completion, for instance, uses LLM tracing to score if the agent fully resolves the inferred goal, while Tool Correctness verifies argument accuracy in calls. These build on single-turn foundations but require @observe decorators for tracing. If your RAG evolves toward agents, start with single-turn baselines before layering in these—DeepEval’s modularity makes the transition seamless.

Key Metrics: Evaluating Retrieval and Generation

DeepEval’s metrics are grouped by pipeline stage, allowing targeted diagnostics. We can probe the retrieval phase with Contextual Precision, Contextual Recall, and Contextual Relevancy, or the generation phase via Answer Relevancy and Faithfulness. These not only quantify performance but illuminate failure modes, guiding refinements like prompt tweaks or embedding upgrades. Below, we break them down.

Evaluating Retrieval

Contextual Precision Metric

What it tests: The quality of your ranking (vector search + reranker). It answers the question: “Are the best, most relevant chunks actually appearing at the top of the list you feed to the LLM?”

DeepEval sends every chunk from your retrieval_context (in the exact order your system returned them) to an LLM judge that labels each one as relevant or irrelevant. It then applies a ranked weighting penalty—an irrelevant chunk in position 1 destroys the score far more than one in position 8.

Low score signals: Missing or weak reranker, bad query rewriting, or poor cross-encoder.

Typical JSON reason: “Node at rank 1 is irrelevant to the question” or “Relevant information is buried at ranks 6–9.”

Target: ≥ 0.85 (world-class setups with good rerankers routinely hit 0.92–0.97).

Contextual Recall Metric

What it tests: The raw retrieval power of your embedding model + chunking strategy. It checks whether every single piece of ground-truth information needed to answer the question was actually retrieved at all (any rank).

DeepEval compares your retrieved chunks against the golden context field you provided. An LLM judge extracts required facts from the gold context and verifies if at least one retrieved chunk covers each fact.

Low score signals: Weak embeddings, chunks too small, zero overlap, top-k too low, or domain drift.

Typical JSON reason: “Expected node containing the 2025 pricing table was never retrieved” or “Missing definition of quantum entanglement.”

Target: ≥ 0.90—this is usually the hardest metric to push above 0.95 without fine-tuned embeddings and smart chunking.

Contextual Relevancy Metric

What it tests: Overall noise level in your retrieved set (ignoring order). It’s your early-warning system for chunk size and top-k tuning before you even add a reranker.

Each retrieved chunk is judged individually for minimal relevance; the final score is simply the fraction of chunks that are at least somewhat helpful.

Low score signals: Chunks too large (pulling in unrelated paragraphs), top-k set way too high, or missing basic metadata/date filters.

Typical JSON reason: “4 out of 10 retrieved nodes are generic boilerplate unrelated to the query.”

Target: ≥ 0.80 during early prototyping, ≥ 0.90 once reranking is in place.

Evaluating Generation

Answer Relevancy Metric

What it tests: How well your prompt template keeps the LLM focused on the user’s actual question instead of going off on tangents or adding unsolicited commentary.

The LLM judge scores how directly and completely the generated answer addresses the input, penalizing fluff, premature conclusions, or topic drift.

Low score signals: Prompt is too open-ended, missing “answer concisely” instructions, or retrieval context is noisy (forcing the model to hedge).

Typical JSON reason: “Response contains lengthy background on company history unrelated to the refund question.”

Target: ≥ 0.85—easy to push to 0.95+ with tight prompt engineering.

Faithfulness Metric

What it tests: Pure anti-hallucination guard—does every single claim in the final answer appear (verbatim or paraphrased) in the retrieved context you gave the LLM?

DeepEval breaks the actual_output into individual claims, then checks each one against the entire retrieval_context using an LLM judge. Even one unsupported claim tanks the score.

Low score signals: Prompt doesn’t forbid external knowledge, lost-in-the-middle problem, or the context itself inventing plausible-sounding details.

Typical JSON reason: “Claim ‘full refund within 60 days’ is not supported by any retrieved chunk (context only mentions 30 days).”

Target: ≥ 0.90 in production (many teams enforce ≥ 0.95 with strict prompts and reranking). This is usually the metric stakeholders care about most.

A Debugging Cheat Sheet: Root Causes and Fixes

When metrics flag issues, this cheat sheet maps symptoms to pipeline stages, DeepEval signals, and targeted remedies. Use it post-evaluation to prioritize fixes.

| Stage | # | Root Cause | Typical Symptom | What DeepEval Will Tell You | Fast Fix / Experiment to Try |

|---|---|---|---|---|---|

| Data Ingestion & Chunking | 1 | Chunks too small | Important fact split across two chunks | Contextual Recall ↓ Reason: “Expected node X was never retrieved” | Switch to semantic chunking + 15–25% overlap |

| 2 | Chunks too large / noisy | Irrelevant sentences dilute similarity | Contextual Precision ↓ + Faithfulness ↓ | Target 300–600 tokens per chunk | |

| 3 | Zero overlap | Facts on chunk boundaries disappear | Recall crashes on edge-case questions | Add 100–200 token overlap | |

| 4 | Bad splits (mid-sentence, tables, lists) | Embeddings lose meaning | Both Precision & Recall suffer | Use LlamaIndex SentenceSplitter or LangChain RecursiveCharacterSplitter with better separators | |

| Embedding Model | 5 | Weak or outdated embedder | Synonyms / domain terms not close in vector space | Recall < 0.6 on technical queries | Upgrade to voyage-large-2, bge-m3, e5-large, or text-embedding-3-large |

| 6 | Query vs document style mismatch | Natural language query vs bullet-point / legal chunks | Precision & Recall drop | Use asymmetric models (e.g., e5, bge) or HyDE | |

| 7 | Multilingual or code-mixed data | English-only embedder fails | Recall near zero on non-English queries | Switch to multilingual models (bge-m3, e5-mistral) | |

| Retrieval Strategy | 8 | k too low | Relevant chunk is #6 but you only take top-5 | Contextual Recall ↓ | Retrieve k=20–30 → rerank to final 5–8 |

| 9 | k too high | Too much noise → LLM gets confused | Faithfulness ↓ | Same as above: always rerank | |

| 10 | No reranking | Top result is only marginally relevant | Precision < 0.7, Faithfulness < 0.7 | Add Cohere Rerank, bge-reranker, FlashRank, or Jina Reranker | |

| 11 | No diversity (duplicate chunks) | Same info repeated, still missing the key one | Recall stays low despite high k | Enable MMR (Maximal Marginal Relevance) or deduplication | |

| Query Understanding | 12 | Vague or ambiguous user query | Generic chunks retrieved | Recall very low | Query rewriting LLM step before retrieval |

| 13 | Multi-hop / comparison questions | Single-round retrieval can’t answer | Recall tanks on “Compare A vs B” | Multi-query retriever, query decomposition, or iterative retrieval | |

| Prompt & Generation | 14 | Prompt doesn’t forbid external knowledge | LLM happily adds its own facts | Faithfulness 0.3–0.6 Reason: “Claim X not present in context” | Strict prompt: “Answer ONLY using the provided context. If unsure, say I don’t know.” |

| 15 | Prompt encourages long answers | More words = more chance to hallucinate | Faithfulness drops as answer length grows | Add “Answer concisely” or token-limit the output | |

| 16 | Lost-in-the-middle problem | LLM ignores chunks in the center of long context | Faithfulness low even when info is technically there | Put highest-scored (reranked) chunks first or use newer long-context models | |

| Context Window | 17 | Total tokens exceed model limit | Chunks silently truncated | Sudden Recall/Faithfulness drop on complex queries | Use longer-context models or summarize chunks before stuffing |

| Knowledge Freshness | 18 | Outdated documents in vector store | Retrieves old versions → appears to hallucinate current facts | Users complain “the info is wrong” | Automated re-indexing pipeline + document versioning |

Conclusion: Elevate Your RAG with Data-Driven Iteration

In summary, DeepEval demystifies RAG evaluation by providing precise, actionable insights into retrieval and generation—starting with single-turn metrics as your bedrock. By integrating golden datasets and targeted benchmarks, you can systematically address hallucinations and gaps, fostering a pipeline that’s not just functional but production-resilient. As your system scales to multi-turn dialogues or agentic flows, DeepEval’s extensibility ensures continued reliability, with metrics like Task Completion bridging the gap.

To get started, install via pip install deepeval and run your first eval suite today. For advanced integrations, explore the DeepEval GitHub repository or join their community Discord for tailored advice. Rigorous testing isn’t an overhead—it’s the accelerator that turns promising prototypes into trusted AI solutions. What RAG challenge will you tackle next?